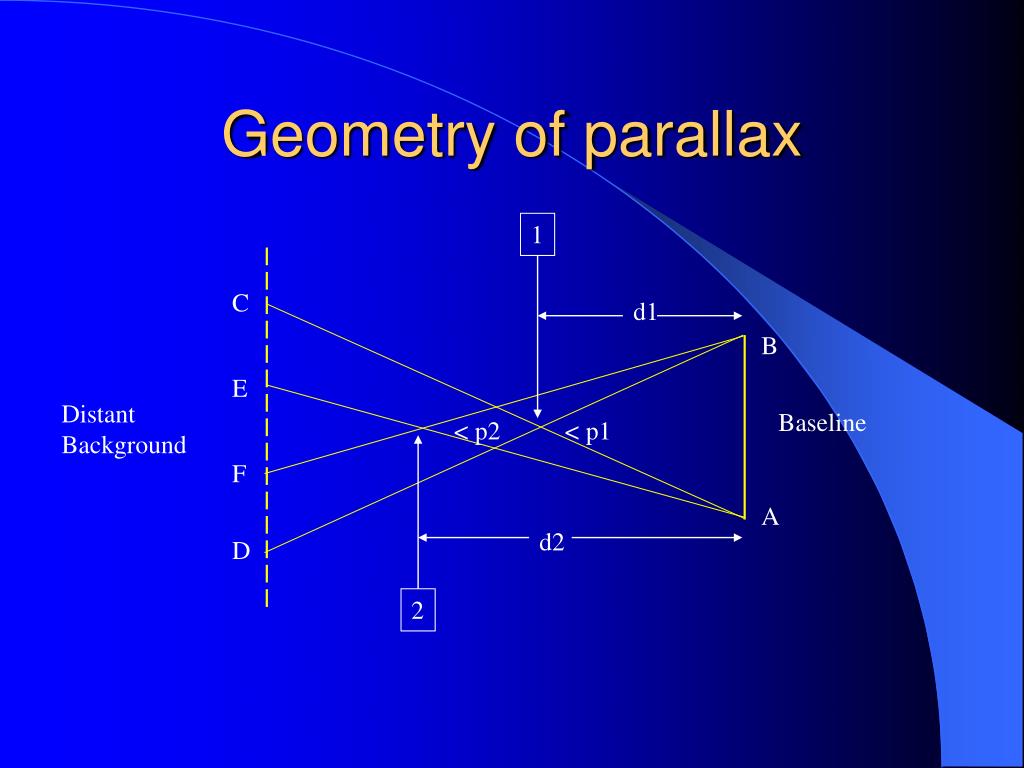

An important function of the visual system is to represent 3D scene structure from a sequence of 2D images projected onto the retinae. During observer translation, the relative image motion of stationary objects at different distances (motion parallax) provides potent depth information. However, if an object moves relative to the scene, the computation of depth from motion parallax is complicated by an additional component of image motion related to scene-relative object motion. To correctly compute depth from motion parallax, the brain should use only the component of image motion caused by self-motion. Previous experimental and theoretical work on perception of depth from motion parallax has assumed that objects are stationary in the world. Thus, it is unknown whether perceived depth based on motion parallax is biased by object motion relative to the scene. Naïve human subjects viewed a virtual 3D scene consisting of a ground plane and stationary background objects, while lateral self-motion was simulated by optic flow. A target object could be either stationary or moving laterally at different velocities, and subjects were asked to judge the depth of the object relative to the plane of fixation. Subjects showed a far bias when object and observer moved in the same direction, and a near bias when object and observer moved in opposite directions. This pattern of biases is expected if subjects confound image motion due to self-motion with that due to scene-relative object motion. These biases were large when the object was viewed monocularly, and were greatly reduced, but not eliminated, when binocular disparity cues were provided. Our findings establish that scene-relative object motion can confound perceptual judgements of depth during self-motion.

Many situations, some critical to an animal’s survival such as hunting prey, require the brain to correctly identify moving objects in a three-dimensional (3D) scene during self-motion. To make sense of the 3D structure of the visual scene, the brain must also accurately infer the depths of moving objects. The visual system has evolved multiple mechanisms to judge the depth of objects in a 3D scene 1, 2, 3. Stereoscopic depth perception relies on slight differences between the retinal images projected onto the two eyes, known as binocular disparity 4, 5. Robust monocular depth perception can also be attained from motion parallax, the image motion induced by translational self-motion 6, 7, 8. Moreover, the brain can estimate depth with greater precision by integrating disparity and motion parallax cues 9, 10, 11.

Regarding depth perception from motion parallax, stationary objects at varying depths have different retinal image velocities during lateral self-translation. In Fig. 1a, when an observer maintains visual fixation on the traffic light while moving to the right, all of the stationary objects in the scene have an image velocity that is determined by their 3D location and the movement of the observer. After taking into account image inversion due to the lens of the eye, stationary objects that are nearer than the point of fixation (traffic light) would have rightward image motion, whereas stationary objects that are farther than the traffic light would have leftward image motion (Fig. 1). In this situation, a stationary object’s depth relative to the fixation plane, \(d\), is determined by the retinal image velocity, \(d\theta /dt\), eye rotation velocity relative to the scene, \(d\alpha /dt\), and the fixation distance, \(f\) (Fig. 1). Mathematically, relative depth is given by the motion-pursuit law 12, which can be approximated for small angles as:

\[ \frac{d}{f} \approx \frac{d\theta /dt}{d\alpha /dt} \qquad (1) \]

Thus, the relative depth of stationary objects can be computed from their image motion if eye velocity relative to the scene is also known.

Although much has been learned about how humans perceive depth from motion parallax 7, 13, 14, 15, 16, 17, 18, 19, 20, 21, 22, previous studies have not considered situations in which the object of interest may be moving in the world. Judging depth of a moving object during self-motion is substantially more challenging, as the retinal image velocity, \(v_{\text{ret}}\), depends on both scene-relative object motion and self-motion (Fig. 1). In Fig. 1a, there is a component of image motion related to the car’s motion in the world, \(v_{\text{obj}}\), and a component of image motion related to the observer’s translation and the car’s location in depth, \(v_{\text{self}}\). To correctly compute the depth of the moving car from motion parallax using the motion-pursuit law (Eq. 1), one would need to use only the component of image motion related to self-motion, \(v_{\text{self}}\), while ignoring the component of image motion due to the object’s motion in the world, \(v_{\text{obj}}\).
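As a rough numerical illustration of why scene-relative object motion matters, the sketch below applies the small-angle form of the motion-pursuit law, \(d \approx f \cdot (d\theta /dt)/(d\alpha /dt)\), to a retinal velocity that mixes a self-motion component with an object-motion component. It is a minimal sketch under stated assumptions, not the analysis used in the study: the function names, the numerical values, and the sign convention (positive and negative values simply denote opposite directions) are all hypothetical, and the retinal velocity is assumed to be the plain sum of the two components.

```python
def depth_from_motion_parallax(retinal_velocity, eye_velocity, fixation_distance):
    """Small-angle motion-pursuit law: d ~= f * (dtheta/dt) / (dalpha/dt).

    Velocities are angular (any consistent unit); the returned depth is
    relative to the fixation plane, in the units of fixation_distance.
    """
    if eye_velocity == 0:
        raise ValueError("eye velocity relative to the scene must be nonzero")
    return fixation_distance * retinal_velocity / eye_velocity


def depth_error_from_object_motion(v_obj, eye_velocity, fixation_distance):
    """Depth error if v_obj is mistakenly attributed to self-motion."""
    return depth_from_motion_parallax(v_obj, eye_velocity, fixation_distance)


# Hypothetical values chosen only for illustration.
f = 1.0            # fixation distance
eye_vel = 4.0      # eye rotation velocity relative to the scene
true_depth = 0.25  # object's actual depth relative to the fixation plane

# Image motion that self-motion alone would produce for this depth.
v_self = true_depth / f * eye_vel

for v_obj in (-0.5, 0.0, 0.5):
    v_ret = v_self + v_obj  # assumed additive combination of components
    naive = depth_from_motion_parallax(v_ret, eye_vel, f)
    corrected = depth_from_motion_parallax(v_ret - v_obj, eye_vel, f)
    print(f"v_obj={v_obj:+.2f}  naive depth={naive:.3f}  corrected depth={corrected:.3f}")
```

In this sketch the naive estimate, which feeds the full retinal velocity into the law, is shifted from the true depth by \(f \cdot v_{\text{obj}}/(d\alpha /dt)\), and the shift reverses sign when the object moves in the opposite direction. The corrected estimate, which uses only the self-motion component, recovers the true depth.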
